AI Surveillance in Prisons: Trained on Inmate Calls, Threatening Reentry

Every prison phone call is now a confession. Securus Technologies' new AI model scans inmate communications in real time, trained on millions of recorded conversations. Families fund the surveillance. States expand the contracts. And the rehabilitation research says this will make reentry harder, not safer.

T.M. Jefferson | www.ctgpro.org

12/17/2025 | 4 min read

"Imagine pouring your heart out on a prison phone call to a loved one, only for an AI to dissect every word, trained on millions of similar conversations to predict potential crimes."

Securus Technologies, a dominant telecom provider in U.S. correctional facilities, has made this a reality. The company's new AI model is currently scanning inmate communications in real time across prisons and ICE detention centers, flagging conversations for potential criminal activity before they're even finished.

While the technology is being piloted in Texas facilities and expanding to Ohio in 2026, what's happening behind the scenes raises urgent questions about privacy, coercion, and whether America's surveillance state has found its most captive market yet.

How the System Works

Securus' AI draws from a massive dataset of recorded inmate phone calls, video visits, texts, and emails. The algorithm scans for keywords and behavioral patterns linked to crimes such as human trafficking, gang activity, and contraband schemes, then flags suspicious communications for human review.
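As a rough illustration only, the scan-and-flag flow described above might look something like the sketch below. Securus has not published its model, so the watch terms, the `flag_for_review` function, and the threshold are all hypothetical. The system described here is a trained model, not a fixed word list, and this sketch does not attempt to reproduce it.

```python
import re

# Hypothetical watch list; the real system's terms and model are not public.
WATCH_TERMS = {"package", "drop", "burner"}

def flag_for_review(transcript: str, threshold: int = 2) -> bool:
    """Return True if the transcript contains at least `threshold` distinct watch terms."""
    words = set(re.findall(r"[a-z']+", transcript.lower()))
    return len(words & WATCH_TERMS) >= threshold

calls = [
    "I'm proud of you, son. Keep your grades up.",
    "Did the package come? Grandma said she would drop it off Friday.",
]

# Transcripts that would be queued for a human reviewer.
flagged = [c for c in calls if flag_for_review(c)]
```

In this toy version the second call, an ordinary family conversation, is queued for review because word matching carries no understanding of context.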

The company claims the technology addresses staffing shortages, allowing facilities to monitor more communications with fewer personnel. Models are customized by state for precision, and the system promises faster interventions than traditional monitoring.

But precision comes at a cost that extends far beyond privacy.

The Money Trail

In October 2025, the FCC, under new leadership, lifted call rate caps and scrapped previous reforms designed to make prison communication affordable. The regulatory change allows companies like Securus to pass the costs of AI development, including data storage and model training, directly onto inmates and their families through higher call fees.

State governments are buying in enthusiastically. Ohio's 2026 budget includes $1 million for a pilot program to analyze all inmate calls statewide, with flagged content feeding directly to law enforcement. Texas facilities are already operational.

The financial burden falls on families who are already paying some of the highest telecommunications rates in the country just to maintain contact with incarcerated loved ones. Now they're unknowingly funding the AI that monitors those conversations.

Coercive Consent

Privacy advocates have a term for what's happening: "coercive consent."

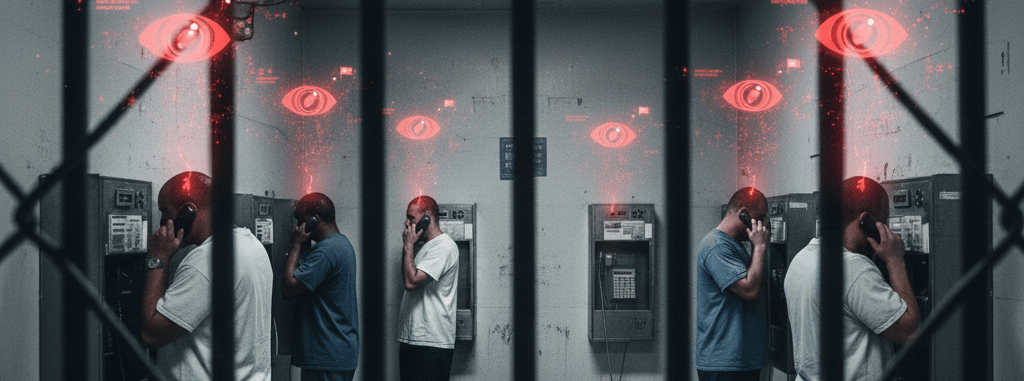

Inmates have no alternative for maintaining family contact. The phone system is a monopoly. Agreeing to be recorded isn't optional; it's the price of talking to your children, your parents, your spouse. And now those recordings aren't just monitored by human staff; they're training datasets for proprietary AI models.

No compensation. No opt-out. No alternative.

The Electronic Frontier Foundation and ACLU have raised alarm about what this means for free speech and due process. Past breaches at Securus have exposed privileged attorney-client calls, and there's no evidence that AI systems can reliably distinguish protected communications from general conversation.

The bias problem is even more troubling. AI trained on language patterns in high-surveillance environments risks amplifying existing racial and socioeconomic disparities in the criminal justice system. A false positive from an algorithm can trigger disciplinary action, extended sentences, or new criminal investigations, based on a machine's interpretation of context it doesn't understand.
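The false-positive risk can be made concrete with a base-rate calculation. All three inputs below are assumptions chosen for illustration, not measurements of any deployed system: 1 in 1,000 calls involves real criminal activity, the model catches 95% of those calls, and it wrongly flags 5% of innocent ones.

```python
def flag_precision(prevalence: float, sensitivity: float, false_positive_rate: float) -> float:
    """Share of flagged calls that actually involve criminal activity."""
    true_flags = prevalence * sensitivity
    false_flags = (1 - prevalence) * false_positive_rate
    return true_flags / (true_flags + false_flags)

# Assumed, illustrative rates only.
precision = flag_precision(prevalence=0.001, sensitivity=0.95, false_positive_rate=0.05)
print(f"{precision:.1%} of flagged calls involve real criminal activity")
```

Under these assumed rates, fewer than 2 in 100 flagged calls would be genuine. When the behavior being searched for is rare, even a model that looks accurate on paper produces mostly false alarms.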

The Chilling Effect

Perhaps the most insidious impact is what researchers call the "chilling effect": the way surveillance changes behavior even when nothing gets flagged.

When every word is potentially evidence, people stop speaking honestly. They avoid topics. They self-censor. They disconnect.

This matters because family contact during incarceration is one of the strongest predictors of successful reentry. Studies show that consistent communication reduces recidivism by up to 13%. Family bonds are protective factors. Isolation increases risk.

AI surveillance systematically undermines this. It introduces fear into the one space where incarcerated people are supposed to maintain human connection. The algorithm doesn't distinguish between a father telling his son he's proud of him and a gang member coordinating a drug drop. Both conversations get fed into the same model, and both get analyzed for threat potential.

Rehabilitation requires vulnerability. You cannot do the hard work of self-examination, accountability, and change in an environment of total surveillance. The two are incompatible.

What Happens Next

This technology will expand. Securus operates in thousands of facilities across the country, and the business model is too profitable to abandon. Other vendors are developing competing systems. State budgets are allocating funds for pilots.

The question isn't whether AI surveillance in prisons will grow. It's whether there will be any guardrails when it does.

Right now, there aren't. No independent audits. No bias testing. No reentry impact assessments. No legal protections for attorney-client communications. No mechanism for challenging algorithmic decisions. No requirement that the technology demonstrate it actually improves safety rather than just generating data.

And no free alternative for families who simply want to talk to their loved ones without funding the machine learning model monitoring them.

What You Can Do

1. Demand transparency. Contact your state legislators and ask what surveillance contracts exist in your state's correctional facilities. Ask who profits, who pays, and whether any independent evaluation is required.

2. Support legislation capping surveillance tech. Several states are considering bills that would limit AI use in prisons, require bias audits, and mandate free communication minimums. Find out if your state is one of them.

3. Challenge the FCC decision. The October 2025 rate cap reversal can be contested through public comment and advocacy. Organizations like Worth Rises and the Campaign for Prison Phone Justice are leading this fight.

4. Fund alternatives. Programs that support family connection, reentry preparation, and trust-based education are the counterweight to surveillance infrastructure. They work. They reduce recidivism. They don't require monitoring every word.

Securus Technologies is betting that no one outside the criminal justice system cares enough about inmate privacy to push back. They're counting on the fact that incarcerated people and their families have no economic leverage, no political power, and no way to opt out.

They might be right.

But if we believe in rehabilitation, if we claim to support second chances, reentry, and reducing the cycle of incarceration, then we can't allow surveillance technology to systematically destroy the family bonds that make those things possible.

The cost of ignoring this isn't abstract. It's measured in recidivism rates, in children growing up without parents, in communities destabilized by preventable reoffending.

"This isn't a tech story. It's a choice about what kind of justice system we're building, and who profits from it."

T.M. Jefferson is the founder of the CTG Educational Program, a literature-based curriculum designed for reentry programs and incarcerated individuals. Learn more at ctgpro.org.

Change The Game Educational Program | www.ctgpro.org | Copyright (c) 2026